While machine learning technologies have been part of our lives for a while, Generative AI now seems to be everywhere. This wave of public interest can be credited to easily accessible tools (such as Stable Diffusion and ChatGPT) that allow users to create convincingly ‘real’ imagery and text from simple prompts.

The hype isn’t unfounded either; you can lose yourself for hours building fantastical imagery on Midjourney, and who hasn’t used ChatGPT to write a cheeky bit of copy, overcome writer’s block, or see what Shakespeare sounds like recited in the style of Snoop Dogg?

The potential of these tools is enticing, but are they suitable for public-facing, social experiences? At Imagination Labs we’ve been conducting explorations to find out, and there is a huge red flag to address: bias.

Machine learning models, while remarkable in their ability to process vast amounts of data, inherit the biases present within their training datasets. Try searching ‘racing driver’ on Getty Images and the results are overwhelmingly white and male; this is the kind of data from which these generative models have learned.

The risk of using AI in public-facing experiences is that, without careful curation of output, a brand could be seen to be perpetuating harmful stereotypes or disproportionately favouring certain genders or racial groups.

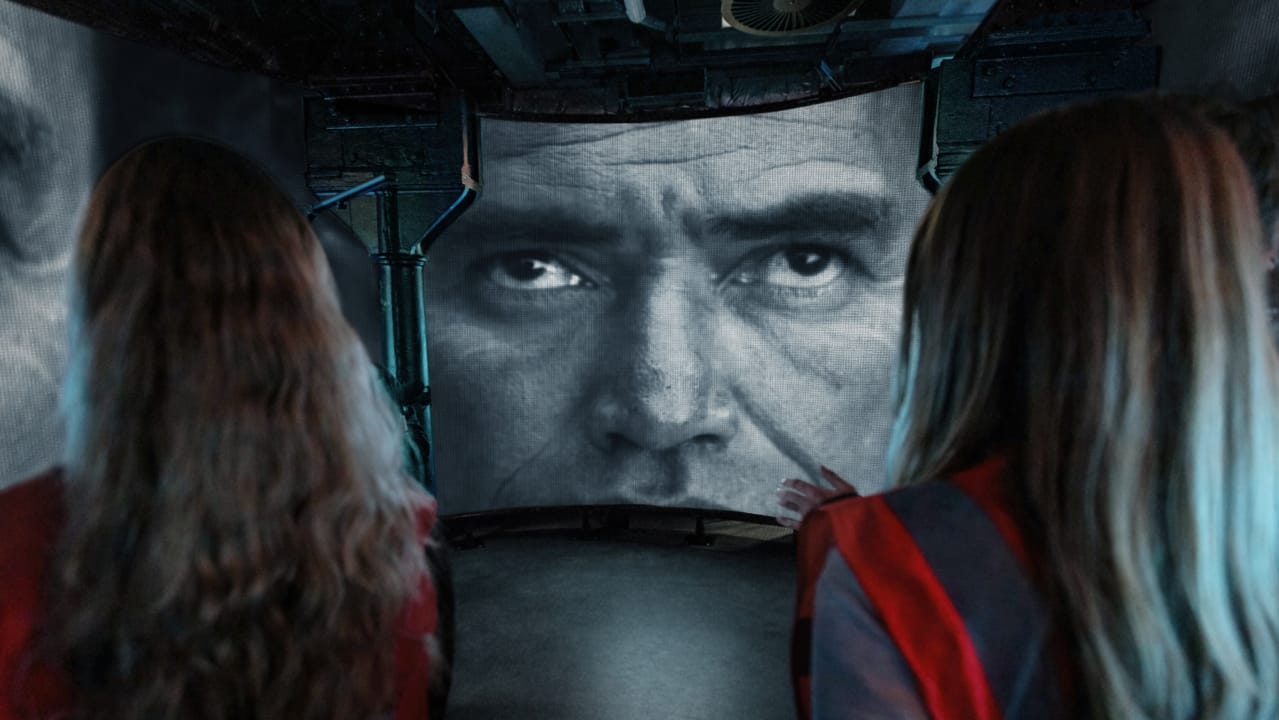

For example, we set up a pipeline to see whether AI could transform a photograph of a person into a fictional character. For the experiment we chose The Terminator, a fitting choice considering the nature of the project. By photographing participants and uploading each image via a Stable Diffusion API, we created a visual morph effect that turned people into a fictional AI killing machine, using non-fictional AI.

So far, so robot. What could possibly go wrong when experimenting with a model that promised to turn you into a character from the popular video game Grand Theft Auto? Unfortunately, the darker your skin tone, the more likely you were to be transformed into a ‘gangster’. This is a glaring example of bias: the system assumed a societal role based on someone’s appearance and perpetuated a negative stereotype. We also discovered, across a number of different models, that people were often transformed into a woman or a man, though it was often not obvious why the model did this.

The problem is with the dataset: it is the data that drives the bias, not the model itself. Generative models, whether Large Language Models (LLMs) or image generators, are a mirror of our existing societal biases, which exist at multiple levels, from the historical inequity of race and gender to the language we use (and have used) in books and online.

As more businesses look to improve their diversity and inclusion, this is particularly problematic. Just as internal D&I boards and leaders exist to ensure businesses maintain an ethical and inclusive culture, organisations also have a responsibility to moderate any creative work they output, whether it’s a traditional photoshoot or something generated by AI.

Curation, programming and oversight should be deeply embedded in the development and deployment of AI systems, and this is one of the drivers for us conducting research and building proprietary pipelines to combat the ignorance of the machines. This includes building a library of prompts that ensure diversity of outputs, training our models with verified unbiased data, and setting up a rigorous QA system to ensure anything generated by AI adheres to our standards and those of the brands we work with.
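One small part of such a review process could be an automated balance check that runs before human sign-off. The sketch below is an illustration only, not a description of our own pipeline: it assumes reviewers have already tagged each generated output with a label, and it treats an even split across categories as the target, which is itself a choice each team would need to make. The function name `audit_balance` and the `tolerance` parameter are hypothetical.

```python
from collections import Counter


def audit_balance(labels, expected, tolerance=0.5):
    """Flag categories whose share of outputs strays too far from an even split.

    labels:    one reviewer-assigned label per generated output
    expected:  the categories we want to see represented
    tolerance: allowed relative deviation from the even-split target
               (0.5 means a share may be up to 50% above or below target)
    """
    counts = Counter(labels)
    total = len(labels)
    target = 1 / len(expected)  # assumption: an even split is the goal
    report = {}
    for category in expected:
        share = counts[category] / total if total else 0.0
        report[category] = {
            "share": share,
            "flagged": abs(share - target) > tolerance * target,
        }
    return report


if __name__ == "__main__":
    # Ten outputs tagged by a reviewer; eight were tagged "man".
    sample = ["man"] * 8 + ["woman"] * 2
    for category, result in audit_balance(sample, ["man", "woman"]).items():
        print(category, result)
```

A flagged category is a prompt for a human to look more closely, not a verdict; the check only counts labels and cannot judge whether an individual image is stereotyped.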

Businesses need to think carefully about this, and one way to do so is to establish Gen AI Principles for all teams, so that they can use AI to enhance creativity while staying aware of the risks involved as well as the opportunities.

The data put into prompts is as important as the output. This can include writing prompts that explicitly ask the model to avoid bias, or that request a range of genders, ethnicities and ages in the output. Good data in, good data out.
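As a rough sketch of what requesting a range of attributes might look like in practice, the snippet below generates a batch of prompts that cycles through combinations of gender, ethnicity and age so that no single combination dominates. The attribute lists, the prompt wording and the function name `build_prompts` are all illustrative assumptions rather than a vetted taxonomy or our production prompt library.

```python
import itertools
import random

# Hypothetical attribute lists, for illustration only.
ETHNICITIES = ["Black", "East Asian", "South Asian", "white", "Latino", "Middle Eastern"]
GENDERS = ["woman", "man", "non-binary person"]
AGE_RANGES = ["aged 20 to 39", "aged 40 to 59", "aged 60 and over"]


def build_prompts(subject, count, seed=0):
    """Return `count` prompts for `subject`, spreading attribute combinations evenly."""
    combos = list(itertools.product(ETHNICITIES, GENDERS, AGE_RANGES))
    # Shuffle with a fixed seed so batches are varied but reproducible.
    random.Random(seed).shuffle(combos)
    # Cycle through the shuffled combinations so each is used before any repeats.
    selected = itertools.islice(itertools.cycle(combos), count)
    return [
        f"{subject}, {ethnicity} {gender}, {age}"
        for ethnicity, gender, age in selected
    ]


if __name__ == "__main__":
    for prompt in build_prompts("racing driver", 5):
        print(prompt)
```

Varying the prompt does not guarantee balanced output, since the model can still ignore or distort the requested attributes, so the results still need human review.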

By combining the power of artificial intelligence with human intuition, we can create innovative experiences that are not only data-driven but also socially conscious and respectful.

This article first appeared in Creative Brief.