Brands are looking to create ever more spectacular and inventive brand experiences in their bid to delight and thrill existing – and potential new – customers.

To do this, they have explored interactive environments across a multitude of platforms, with cues and inspiration ranging from immersive theatre to behavioural science and everything in between. But now the process of designing these experiences is being transformed by applying gaming technology to help craft and envisage brand spaces.

Moving from 2D drawings to 3D concepts has always involved significant time, effort, resources and imagination. But by using real-time software such as Unreal Engine, designers can apply the techniques of the gaming and film industries to experience design. This opens up multiple opportunities, not least that marketers and brands can engage more actively with, and contribute to, the design conversation as it unfolds.

Bringing 3D visualisation into the design stages adds a new level of collaboration and makes the process more accessible, which leads to better-quality design solutions.

Designing within Unreal means the design team can create photorealistic renders (software-generated images of a computer model of a design) that can be navigated and manipulated in real time: materials can be changed, lighting altered, weather adjusted or a different time of day chosen. This can all be done while discussing the design with clients and the wider design team, which is game-changing for brands and marketers. The result is more meaningful interaction, discussion and debate, which clearly benefits the final design output.

Traditionally, environmental designers would use two drawing packages, one for CAD (computer-aided design) technical drawings and the other for visualisation. The design team would need to draw plans, elevations and detailed drawings for contractors within a CAD package and then create the same drawings in 3D within a traditional rendering program, effectively doing the same job twice.

New levels of interactivity

As part of a multidisciplinary design team working on sensory experiential projects, we find this technology platform allows the multitude of inputs to be combined and tested. Unreal enables more interactive and immersive experiences within the 3D visualisation environment than physical prototypes allow, and those experiences are then brought to life in the final construction.

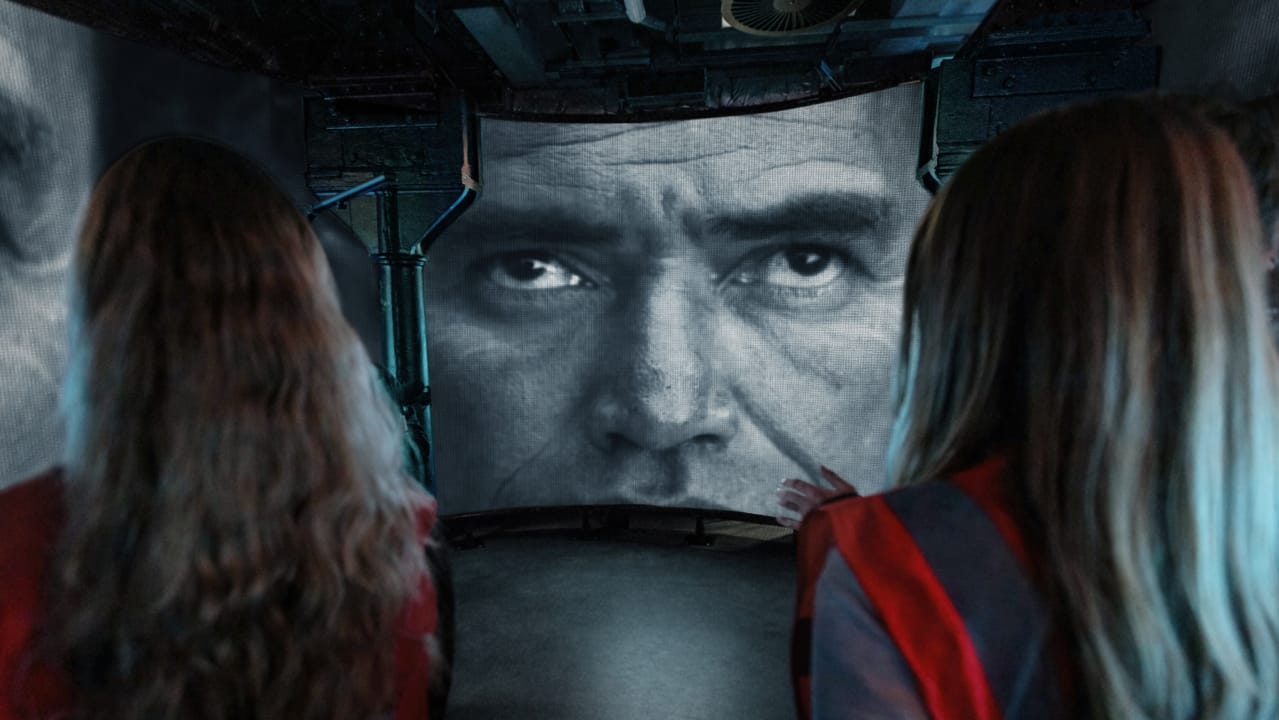

So how has this been used? When MLB briefed us to hit baseballs into Trafalgar Square, our first thoughts were: how do we protect the priceless architecture, how do we keep everyone safe, and how do we create a ballpark within the limited space? So for MLB and its London Series takeover of Trafalgar Square, we invented a game built in Unreal, “Home Run Derby X The Cage”, which was triggered by hitting balls inside a physical batting cage. It was possible because our creative team could easily collaborate with our game designers. Exchanging ideas, the multidisciplinary team spoke the same language and merged the physical and digital environments.

As influencers and members of the public took their turn in the batting cage, the iconic physical surroundings came alive within the game on the huge LED screen at the foot of Nelson’s Column. The latest ball-tracking technology measured the speed and trajectory of the physical ball, and this data was fed directly into Unreal Engine, giving the hitter real-time visual feedback that combined the physical and digital. Animations were triggered and players were rewarded with extra points for home runs, hot streaks and target strikes.
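
For readers curious about the mechanics, here is a minimal sketch of how tracked speed and trajectory could be turned into points. It is not the code that ran in Trafalgar Square: the `BallReading` type, the drag-free distance formula, the 100 m home-run threshold and every point value are assumptions made up for illustration, and it is written in Python rather than inside Unreal Engine. Target strikes are left out for brevity.

```python
import math
from dataclasses import dataclass

G = 9.81  # gravitational acceleration in m/s^2

# Every threshold and point value here is invented for illustration.
HOME_RUN_DISTANCE_M = 100.0
HOME_RUN_POINTS = 100
HIT_POINTS = 10
STREAK_LENGTH = 3   # consecutive home runs needed for a hot streak
STREAK_BONUS = 50


@dataclass
class BallReading:
    """One hypothetical reading from the ball tracker."""
    speed_mps: float         # exit speed in metres per second
    launch_angle_deg: float  # angle above the horizontal


def projected_distance(reading: BallReading) -> float:
    """Drag-free projectile range on flat ground: v^2 * sin(2 * angle) / g."""
    angle = math.radians(reading.launch_angle_deg)
    return reading.speed_mps ** 2 * math.sin(2 * angle) / G


def score_session(readings) -> int:
    """Total points for one hitter's turn, including hot-streak bonuses."""
    total = 0
    streak = 0
    for reading in readings:
        if projected_distance(reading) >= HOME_RUN_DISTANCE_M:
            streak += 1
            total += HOME_RUN_POINTS
            # Bonus on every third consecutive home run.
            if streak % STREAK_LENGTH == 0:
                total += STREAK_BONUS
        else:
            streak = 0
            total += HIT_POINTS
    return total
```

In this sketch the physical ball never needs to leave the cage: the game only needs its measured speed and angle to project where it would have landed and reward the hitter accordingly.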

Despite recent reports of Meta’s VR and AR branch, Reality Labs, losing $21bn since last year, and its heavy investment in the metaverse being scrutinised, VR is still a powerful tool for designers.

Many brands have made their experiences more accessible to those unable to attend in person by outputting digital twins through Unreal Engine on to social platforms and VR, bringing fans and communities closer to the passion and excitement of the live experience. In the future, we hope to build these environments within VR and mixed reality, where multiple creatives can contribute and engage with one another.

This technology democratises the process. It allows designers and clients to share thoughts and ideas within the design process, so even people who cannot readily interpret design plans can now become part of the conversation. They can be involved in real-time modifications, leading to better-quality concepts and executions, which is a huge benefit for marketers bringing their brands to life with experiences.

This article first appeared in Campaign, written by Colin Rivett, Head of 3D from our London studio.